A growing share of buying decisions is being shaped inside AI tools like ChatGPT, Perplexity, and Google's AI Mode and AI Overviews. Analytics platforms can't see these interactions, which leaves businesses guessing about how much of their revenue AI is actually influencing.

Your analytics aren't lying. They just can't see the AI tools shaping the decision.

This guide explains what's driving the gap, why it matters for your brand, and how to start tracking the right metrics to close it.

What is the attribution gap in AI search?

The attribution gap in AI search is the difference between what influenced a customer's decision and what your analytics platform can actually record.

Here’s an example to illustrate this:

Imagine a user asks ChatGPT to compare project management tools. ChatGPT gives them a detailed breakdown that includes your brand as a recommendation.

The user then searches for your brand on Google and clicks the top organic result. They then sign up for your tool.

Your analytics platform attributes the conversion to the click on your organic link in search results. The AI interaction that drove the decision is invisible, because the user didn’t click anything within the AI platform itself.

There are two main ways this attribution gap appears in practice:

- Invisible influence: Your brand gets surfaced in an AI-generated answer, the user reads it and forms an opinion, but never clicks through to your site. The interaction shapes the decision without creating any record of it.

- Agentic search: If an AI agent purchases a SaaS subscription or adds a product to a cart without a human ever visiting your site, the session that drove the transaction may never have existed on your end. You see the conversion, but have no information about where it occurred or what influenced it.

The result is a growing category of "dark traffic": visits and conversions whose true origin is unknown.

Why attribution has always been a challenge

Marketing attribution has always been a challenge because real buying journeys are more complicated than visiting your website and converting. There are always steps in between, outside influences, and nuances of analytics platforms that make clean attribution difficult.

Think about how people have always made decisions about significant purchases:

- They ask friends and colleagues for recommendations

- They watch YouTube reviews

- They search Reddit threads for honest opinions from people who've already bought the thing

- They see a billboard, hear a podcast ad, or notice a brand mentioned in a newsletter

None of those touchpoints show up cleanly in your analytics either.

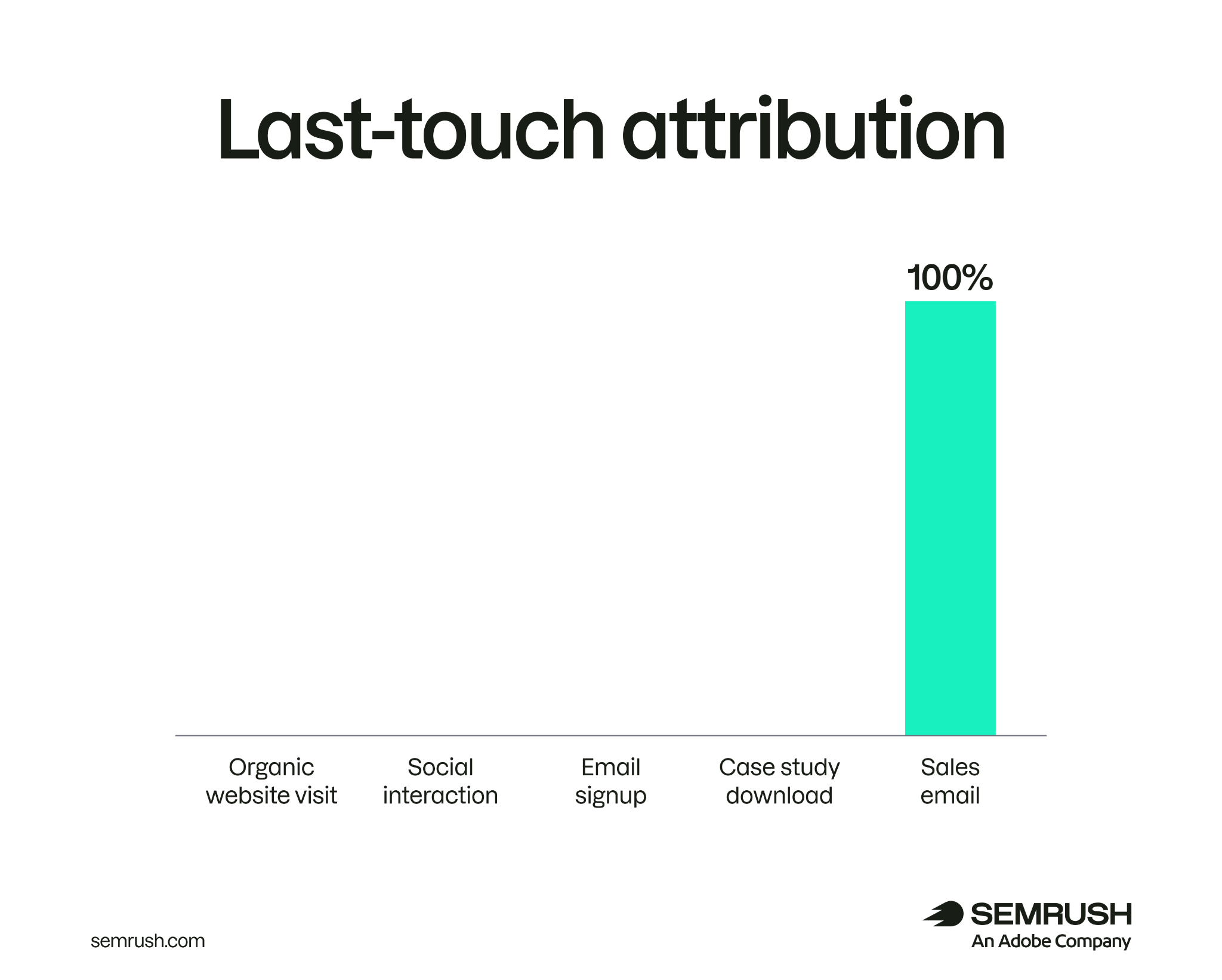

Attribution models like last-click have always been problematic because they ignore the influence of all the intermediary steps.

Platforms like Google Analytics typically use data-driven attribution models rather than last-click, but the problem still exists. AI and agentic search have made even these more complex models insufficient.

According to a ChannelEngine report, 58% of marketplace consumers use AI tools to research products. All the transactions associated with these consumers are potentially being attributed inaccurately.

Perfect attribution in marketing has never existed. But what's new isn't that attribution is imperfect. It's that AI search creates entire categories of influence that leave no record at all, not even a sloppy one.

How agentic search breaks the funnel

Agentic AI search introduces two dynamics that make attribution trickier than it already was: query fan-out and agentic commerce. Query fan-out expands the range of source pages used to answer a single user prompt. Agentic commerce lets AI agents take action without users visiting your site at all.

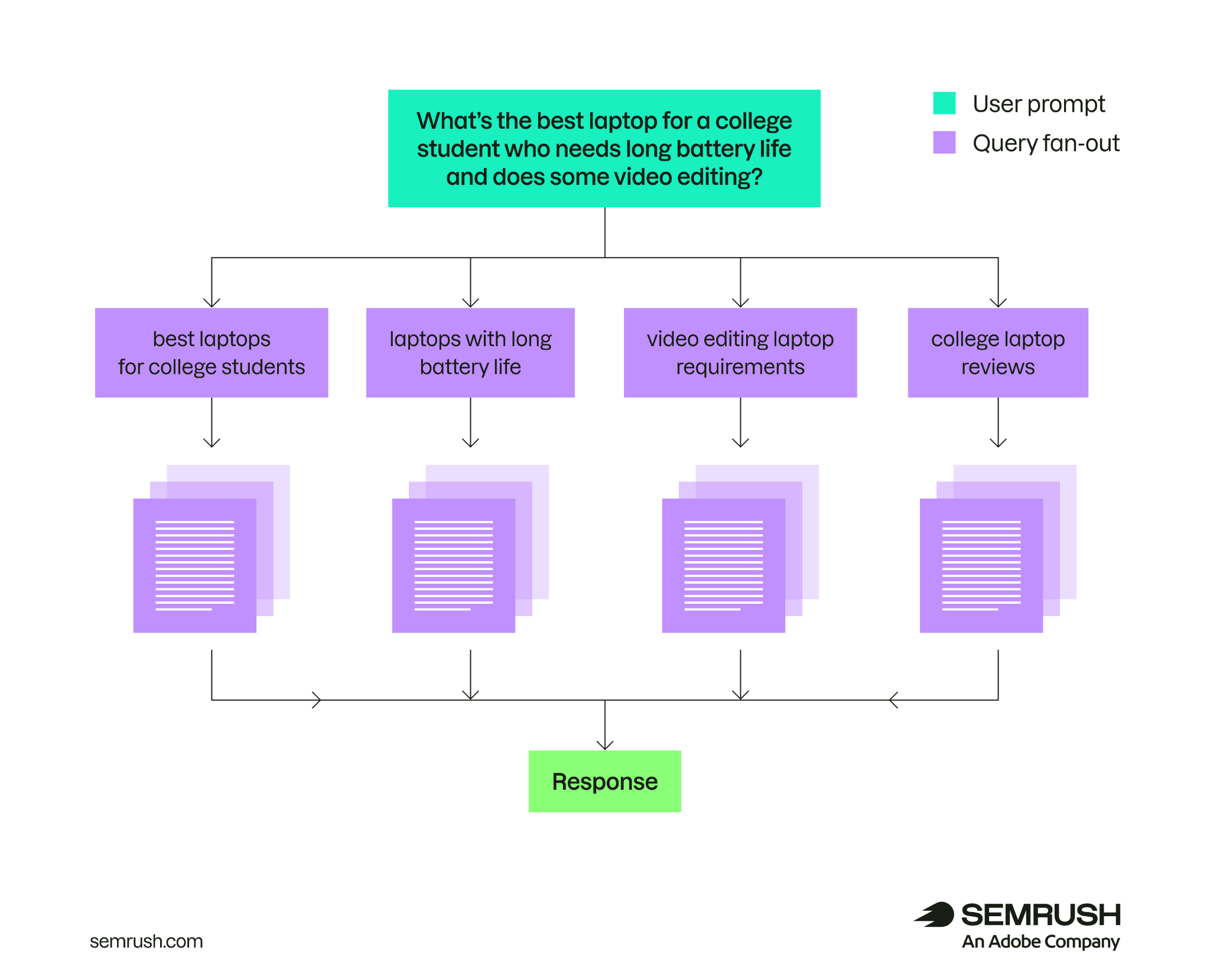

Query fan-out

Query fan-out is a process AI systems use to split user queries into multiple related sub-queries. This allows the AI tool to gather information on relevant topics from multiple sources to give the user a more comprehensive answer.

With query fan-out, multiple source pages contribute to a single response. The user may visit one of those sources, or none of them. The others all influence what the AI says and, by extension, what the user thinks, but receive no traffic, no sessions, and no attribution.

For example, if someone asks ChatGPT about your brand's products, the tool might use query fan-out and return several of your site's pages in its sources. But the user might visit your site directly and make a purchase.

This means you have no visibility on which pages on your site actually influenced the user's action. All you'll see in your analytics is a direct traffic conversion or, at most, a referral that says the user came from ChatGPT.

We'll show you below how to track which pages AI tools are citing so you can close this aspect of the attribution gap.

Further reading: What Is Query Fan-Out & Why Does It Matter?

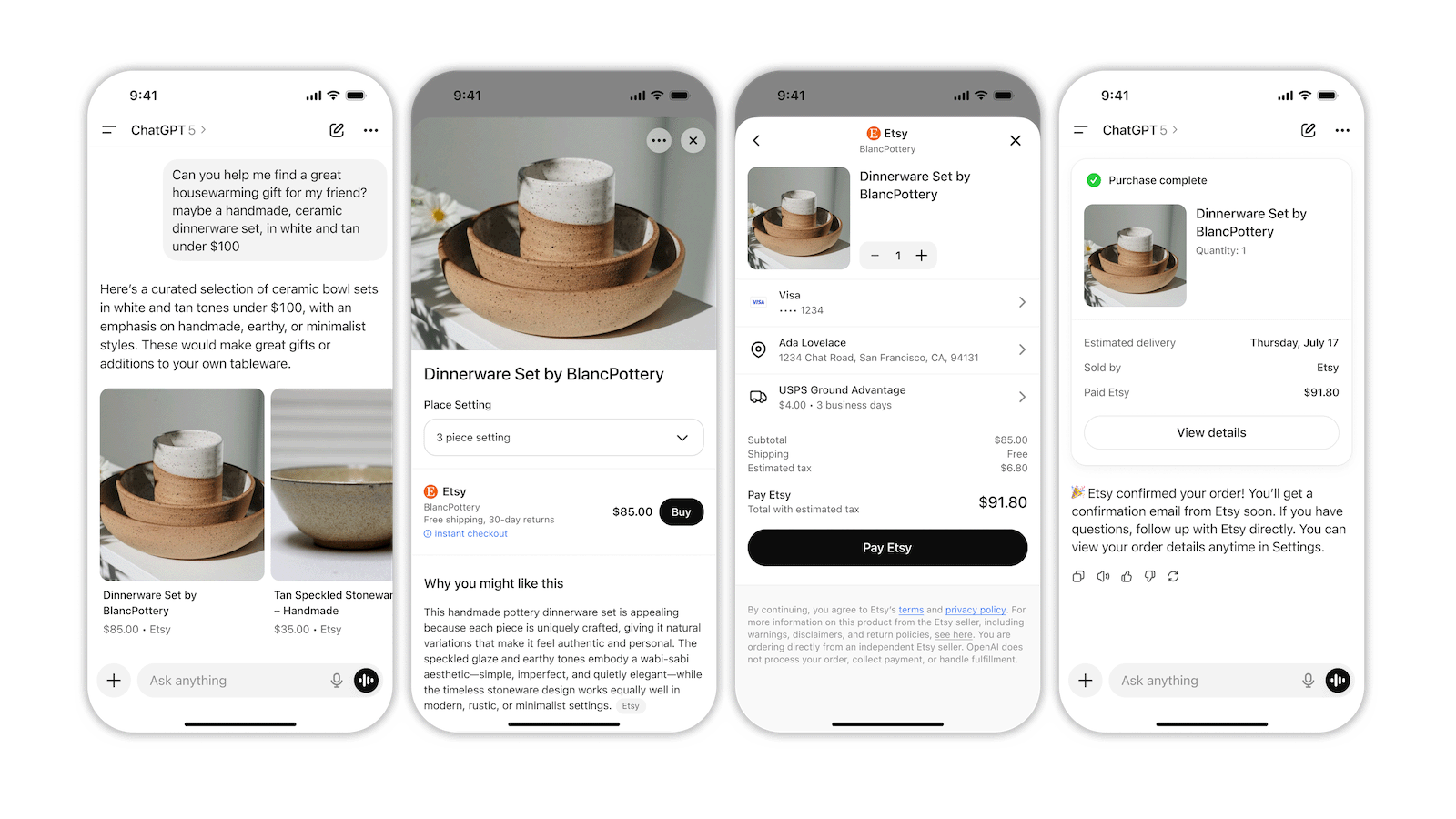

Agentic commerce

AI agents can now browse, compare, and in some cases complete purchases autonomously on a user's behalf.

Image Source: OpenAI

If an agent buys a SaaS subscription or places a product order, the brand never receives a site visit. There's no visibility on the session that led to the transaction.

Agentic commerce is still in its early stages. Platforms are rolling out agentic protocols like ACP, MCP, and A2A to make transactions inside AI tools easier. As these protocols mature, agentic commerce will become a major source of revenue for brands.

This makes it vital to understand how you can close the attribution gap these kinds of agentic search experiences create.

A three-tier measurement framework for the agentic era

You can't close the agentic attribution gap with a single metric or tool. The gap exists across different parts of the buying funnel, and measuring it means tracking signals at each stage.

The framework below moves from the earliest stage of AI influence (whether your content can be found at all) through to real business outcomes (whether AI visibility is driving conversions). Each tier has specific metrics underneath it. Track them alongside your traditional analytics. The metrics here are directional rather than definitive, so cross-reference movements in one against movements in another to build a fuller picture.

Tier 1: Are you eligible to be found?

Tier 1 covers the basics of whether AI tools can find your brand. Before you can appear in an AI-generated answer, your content needs to be crawlable and usable by AI systems. This tier is about making sure you're in consideration at all.

Signals to watch here include:

- Whether AI crawlers like GPTBot, ClaudeBot, and PerplexityBot are accessing your site

- How much of your content is structured clearly enough to be extracted and cited

- Whether your key pages are being indexed by the sources AI tools tend to rely on (e.g., Google and Bing)

You don't need to actively track these signals to gauge attribution. They're the fundamentals that make attribution possible in the first place. Run these checks with a quick AI visibility audit.
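If you have access to your server logs, the first signal above can be checked with a short script. The sketch below is a minimal example, assuming access logs in the common "combined" format where the user agent is the last quoted field. The sample log lines and user-agent strings are made up for illustration, and real crawler user agents are longer than these.

```python
import re
from collections import Counter

# User-agent substrings for the crawlers named in this guide; extend as needed.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Assumption: combined log format, where the user agent is the last quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler based on the user-agent string."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue  # line does not end with a quoted field
        user_agent = match.group(1).lower()
        for crawler in AI_CRAWLERS:
            if crawler.lower() in user_agent:
                hits[crawler] += 1
                break
    return hits


# Illustrative log lines, not real traffic.
sample_log = [
    '203.0.113.5 - - [01/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2026:10:01:00 +0000] "GET /blog HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.2 - - [01/Jan/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 300 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits = count_ai_crawler_hits(sample_log)
for crawler in AI_CRAWLERS:
    print(crawler, hits[crawler])
```

User-agent strings can be spoofed, so treat the counts as a rough signal that crawlers are reaching your pages rather than a verified record.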

For deeper guidance on getting your content into AI answers, see our guide to ranking in AI search.

Tier 2: Are you actually appearing?

Tier 2 measures whether you're being mentioned in AI-generated answers for the queries that matter to your business: how often, on which platforms, and how you compare to rivals.

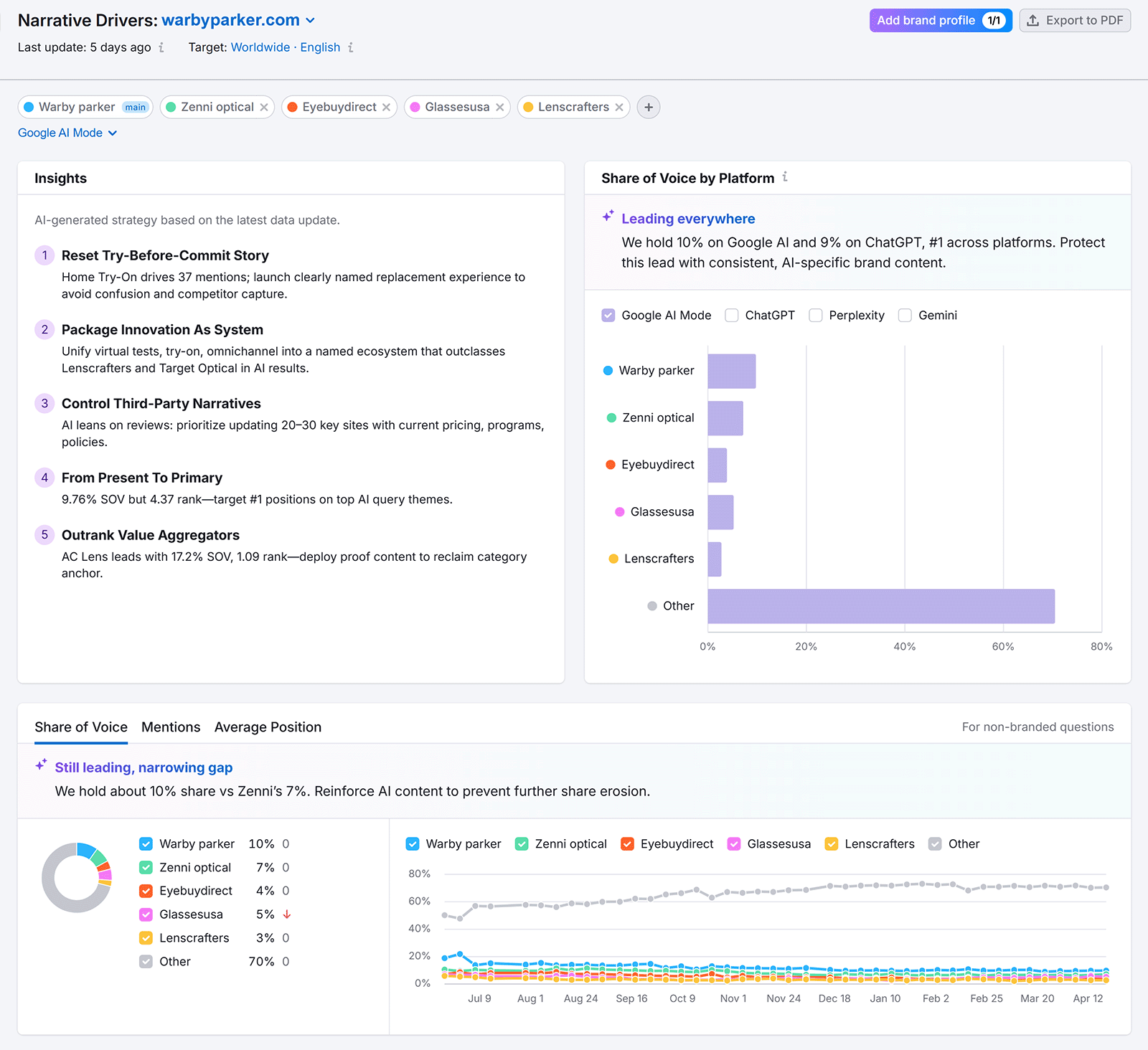

AI share of voice

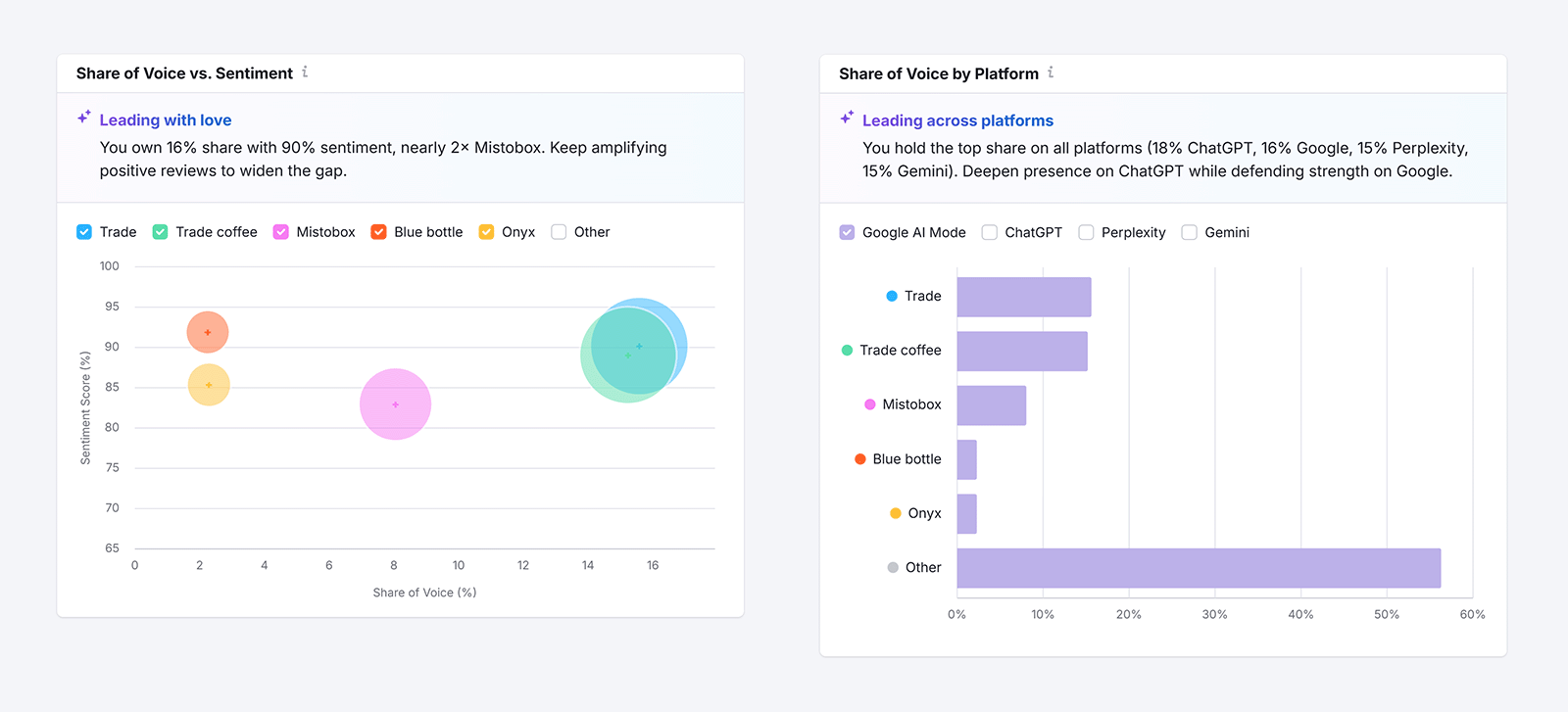

AI share of voice measures what percentage of AI-generated answers for your target queries include your brand, compared to your competitors.
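To make the definition concrete, here is a minimal sketch of the arithmetic, assuming you have collected a set of AI answers for your target queries. The brand names and answers are hypothetical, and this is a plain mention rate based on substring matching. A tracking tool may calculate share of voice differently.

```python
def share_of_voice(answers, brands):
    """Return the percentage of answers that mention each brand."""
    total = len(answers)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    result = {}
    for brand in brands:
        # Case-insensitive substring match; a simplification for illustration.
        mentioned = sum(1 for text in answers if brand.lower() in text.lower())
        result[brand] = round(100 * mentioned / total, 1)
    return result


# Hypothetical AI answers for a set of target queries.
answers = [
    "For small teams, Acme PM and TaskNest are both solid options.",
    "TaskNest is popular with agencies.",
    "Acme PM has strong reporting features.",
    "Consider BoardFlow if you need Kanban views.",
]

sov = share_of_voice(answers, ["Acme PM", "TaskNest", "BoardFlow"])
print(sov)
```

In this sample, Acme PM and TaskNest each appear in two of the four answers (50%), and BoardFlow appears in one (25%).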

Why this matters for attribution: If your AI share of voice increases, your brand is appearing in more AI-generated answers related to your industry. Track this alongside direct traffic and conversions. If they move together, you have reasonable evidence that AI visibility is influencing real business outcomes.

If your share of voice is flat or shrinking, our guide to why competitors are winning AI search covers the most common reasons.

How to track it: Use Semrush's AI Visibility Toolkit to track your AI share of voice across ChatGPT, Perplexity, Gemini, Google AI Mode, and AI Overviews, over time and against your competitors. You'll find share of voice information in the "Narrative Drivers" tab.

AI citations and mentions

An AI mention means your brand was referenced in a response. A citation means an AI tool included a link back to a specific page on your site. Not every mention includes a citation, and not every citation accompanies a mention. Some brand mentions include citations for other websites entirely, and some citations point to non-brand information on your site (like an answer to a question or a definition of a concept).

Why this matters for attribution: Citations provide attribution signals when the user clicks the link (see AI referral traffic below). If citations increase alongside conversions from referral or direct traffic, that suggests your growing presence in AI responses is contributing to conversions.

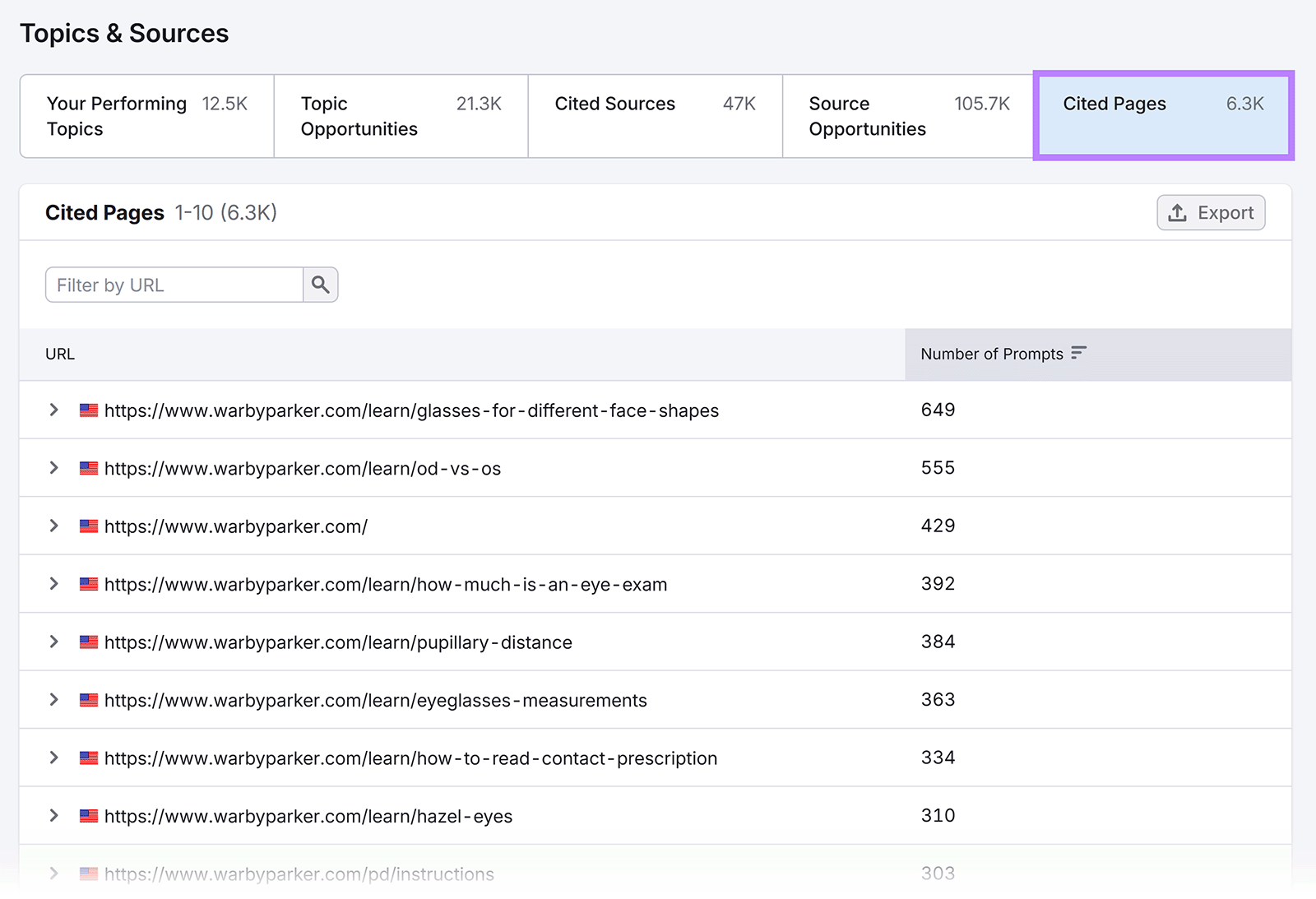

Tracking which specific pages on your site get cited also informs content decisions. If a particular page is being cited frequently, it's worth regularly updating and expanding. If a high-value page is never cited, restructure it to be more easily extracted by AI systems.

See our guide to AI content optimization for more on this.

Brand mentions without a clickable citation still shape what the user thinks about your brand. If you only track citations, you're missing every instance where AI recommended or described you without linking out, and those instances often account for most mentions.

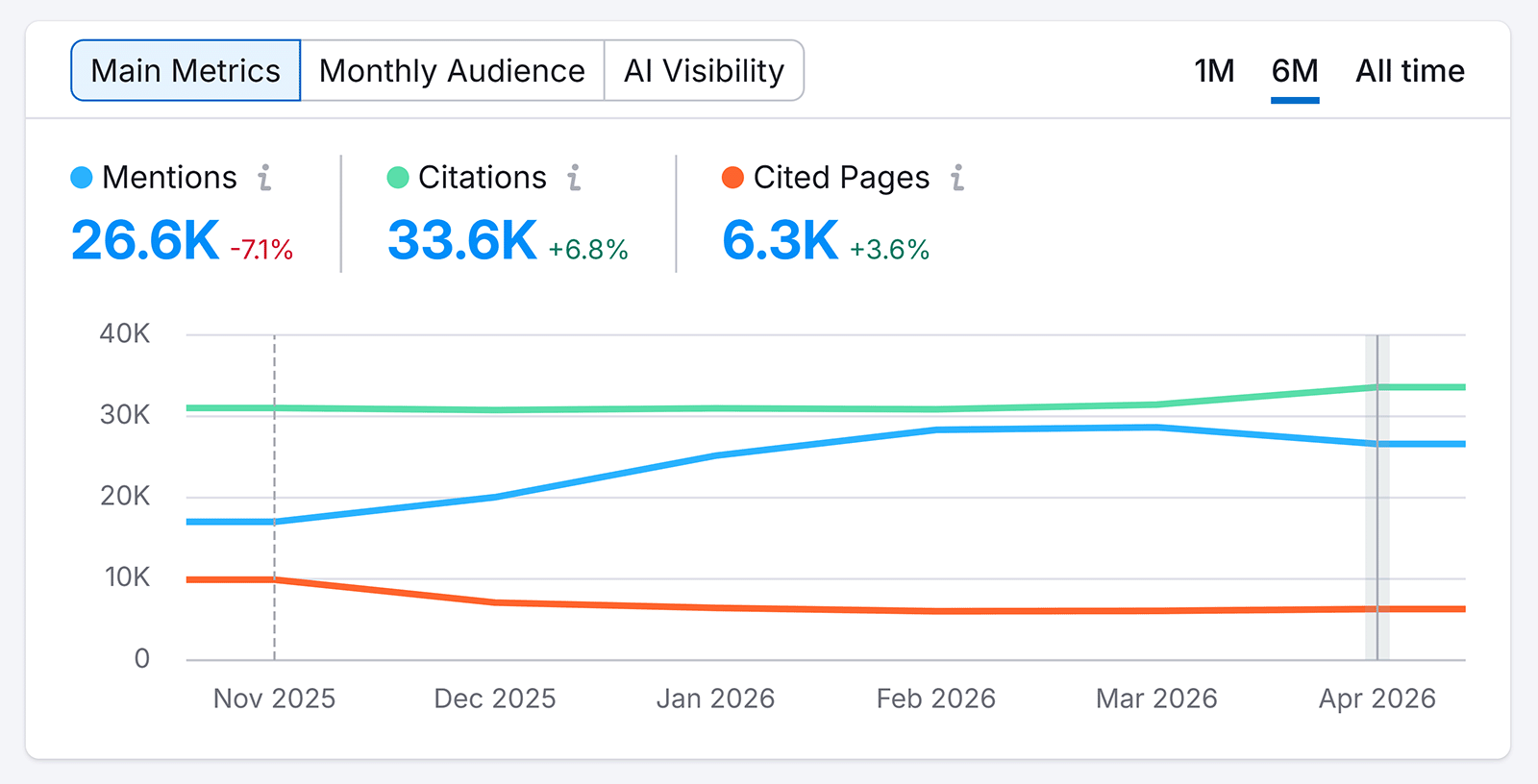

How to track it: Semrush's AI Visibility Toolkit tracks both mentions and citations over time, including which specific pages are being referenced and by which platforms. You'll see this in the "Visibility Overview" tab.

Scroll down and filter by "Cited Pages" to see which pages AI tools are citing. Make sure these pages are up to date and optimized for conversions.

Brand sentiment in AI answers

Brand sentiment measures how AI tools talk about your brand in responses to users. A response might describe your product as "a good option for small teams but limited at enterprise scale," or flag a known complaint from user reviews. Inaccurate or outdated framing turns away buyers before they reach your site.

Monitoring sentiment means regularly checking how AI tools describe your brand when they mention it, and asking:

- Are the descriptions accurate?

- Do they reflect your current product?

- Are there recurring negatives that trace back to an old review or an outdated feature?

Why this matters for attribution: Brand sentiment explains conversion patterns that other metrics can't on their own. If your share of voice increases without a corresponding increase in conversions, cross-analyzing with sentiment fills in the gap. A sentiment analysis might show that AI tools hedge their recommendations for your product, citing limited features or poor reliability compared to rivals. The mentions keep growing, but the negative context blocks conversions.

A handful of strongly positive mentions often drives more conversions than frequent mentions with neutral or mixed framing.

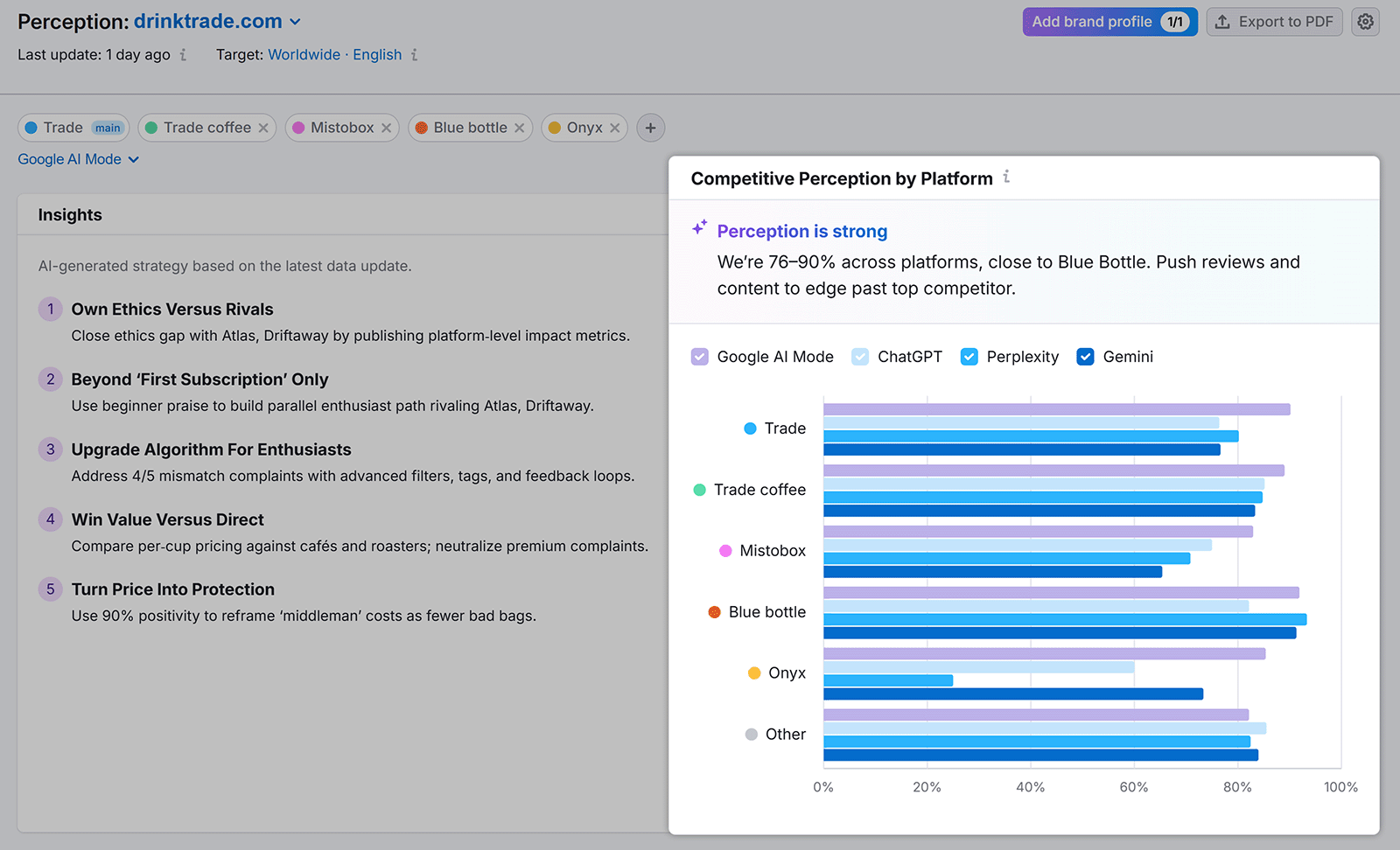

How to track it: Semrush's AI Visibility Toolkit includes a "Perception" report that surfaces how your brand is being characterized across platforms and flags sentiment trends over time. It also shows how this compares to your competitors.

You can track sentiment against share of voice directly within Semrush, which makes cross-analyzing the two metrics easy.

In the example above, Mistobox has a higher share of voice than Blue Bottle, but a much lower sentiment score. That's useful intelligence for Mistobox if they were seeing more AI referrals but no increase in conversions.

Tier 3: Is it driving business outcomes?

Tier 3 connects your AI visibility to your business goals. The signals here are proxies rather than hard attribution, but it's where you start closing the attribution gap and understanding how AI tool use is influencing conversions.

Branded search volume

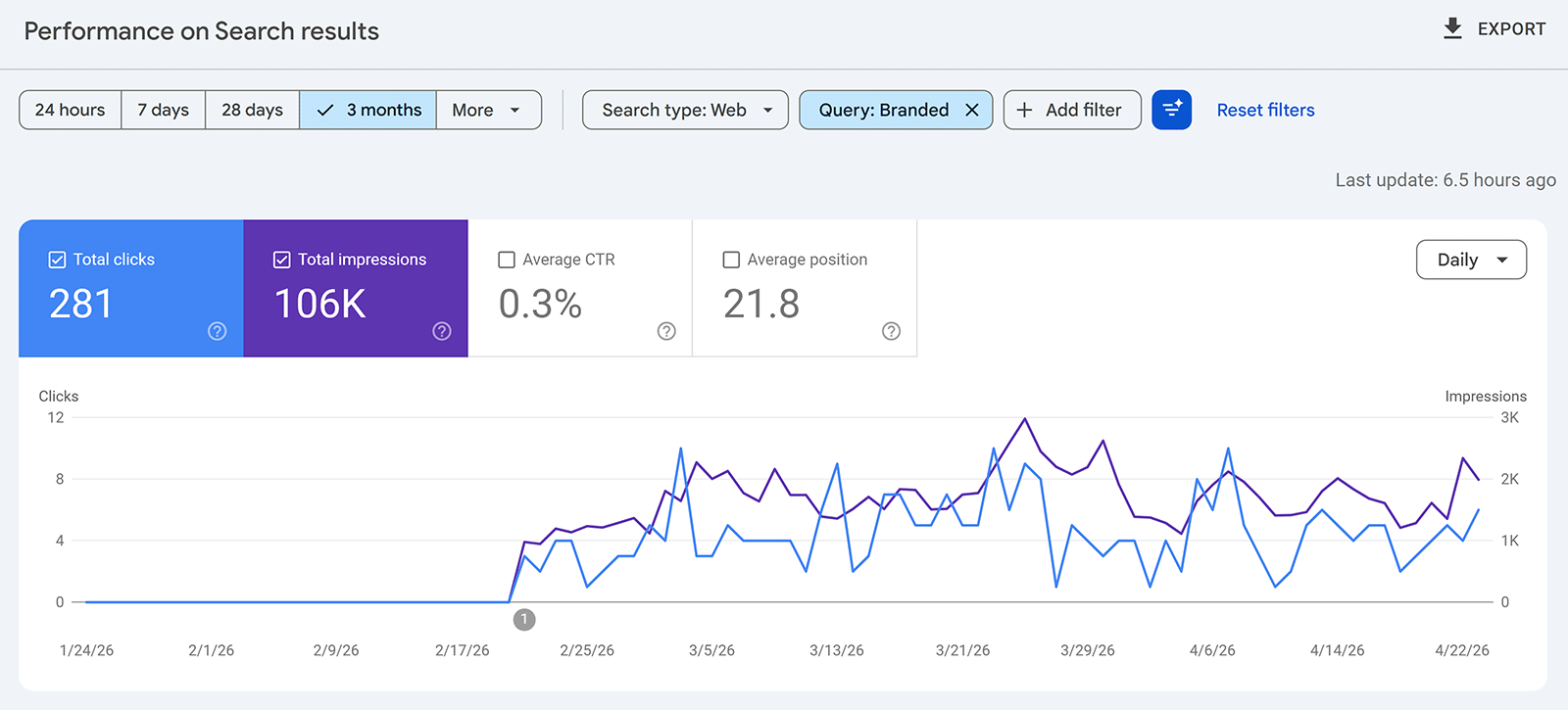

Branded search volume measures how many people are searching for your brand or your products and services directly.

When someone encounters your brand in an AI answer and wants to learn more, they won't always click a citation. They might open a new tab and search your brand name in Google. That search shows up in Google Search Console as a click and in your analytics platform as an organic visit, with no visible connection to the AI interaction that prompted it.

Why this matters for attribution: Tracking branded search volume over time gives you a directional signal. If your AI mentions are increasing and your branded search volume is also rising, that's a reasonable indication that AI visibility is driving awareness and interest.

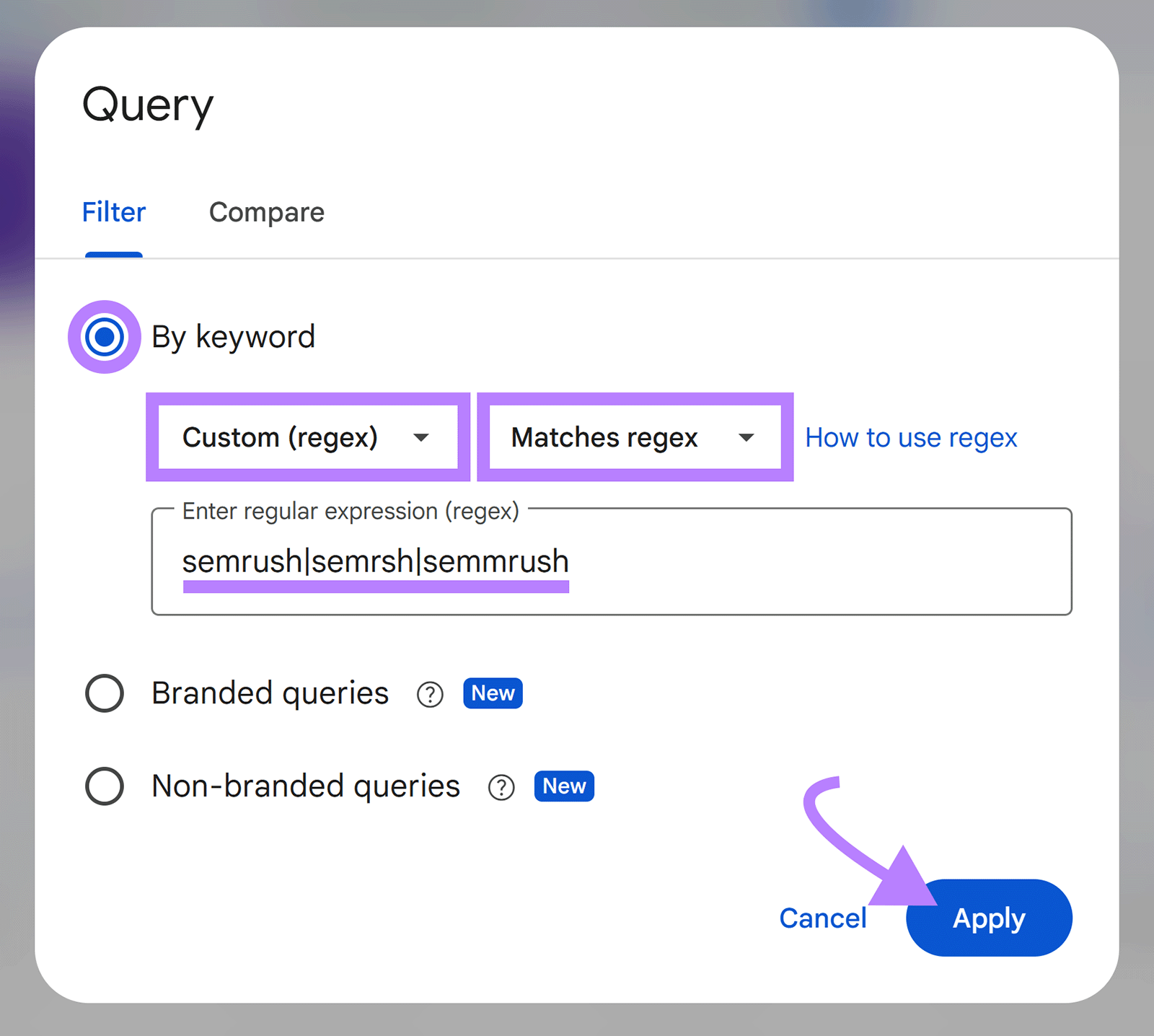

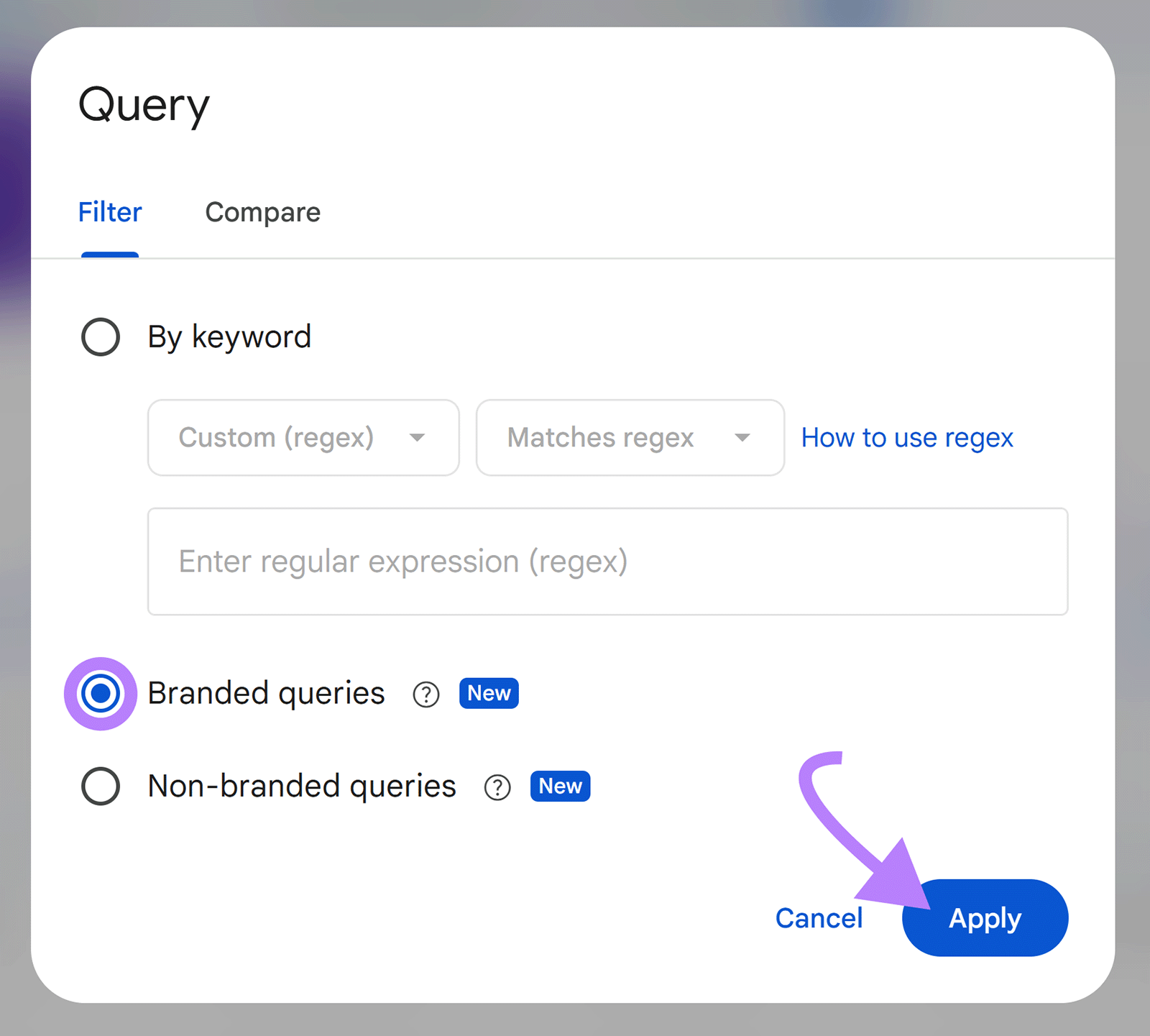

How to track it: Track branded search volume in Google Search Console in the Performance report, filtering queries by your brand name (and common misspellings) and related products. Click "+ Add filter" > "Query" > "Apply."

Google Search Console also rolled out a new "Branded queries" filter that does this for you. It's only available for sites with a sufficient volume of queries and impressions. Google announced the filter in November 2025 and rolled it out to all eligible properties on March 11, 2026.

Track branded search impressions and clicks over time to see whether they correlate with AI visibility changes.
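One simple way to check whether two series move together is a Pearson correlation. The sketch below assumes you have exported monthly AI mention counts and branded search clicks into two equal-length lists. The numbers are invented for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Hypothetical monthly figures: AI mentions and branded search clicks.
ai_mentions = [12, 15, 19, 22, 30, 34]
branded_clicks = [400, 420, 455, 470, 540, 560]

r = pearson(ai_mentions, branded_clicks)
print(f"correlation: {r:.2f}")
```

A value close to 1 means the two series rose and fell together over the period. It doesn't prove that AI visibility caused the change, and a handful of monthly data points is a thin sample, so read it as one directional signal among several.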

Direct traffic trends

Direct traffic includes visits where a user typed in your URL directly or clicked a bookmark, but it can also include traffic from unknown sources.

Why this matters for attribution: Direct traffic captures visits where the true source is unknown, which increasingly includes AI-influenced visits that don't pass referral data. Tracking how this changes over time gives you a rough proxy for growing AI influence.

How to track it: To estimate how much AI is contributing to your direct traffic, pull your numbers from before AI tools became widely used (around early 2023) and compare them to now. If direct traffic has grown without a corresponding increase in paid spend, email volume, or other known drivers, AI influence is a likely explanation.

This is a proxy, not a precise measurement. Many factors can account for trends over several years, but it's a useful data point to include in a broader picture.
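The comparison itself is simple percentage-change arithmetic. Here is a minimal sketch, assuming you have pulled baseline and current figures for direct traffic and for the known drivers you want to rule out. The metric names and numbers are hypothetical.

```python
def pct_change(before, after):
    """Percentage change from a baseline value to a current value."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return 100 * (after - before) / before


# Hypothetical monthly figures from the baseline period and from now.
baseline = {"direct_sessions": 8000, "paid_spend": 20000, "emails_sent": 50000}
current = {"direct_sessions": 10400, "paid_spend": 20500, "emails_sent": 51000}

changes = {
    metric: round(pct_change(baseline[metric], current[metric]), 1)
    for metric in baseline
}
print(changes)
```

In this sample, direct sessions grew 30% while paid spend and email volume grew by about 2% each, which is the kind of mismatch worth investigating further.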

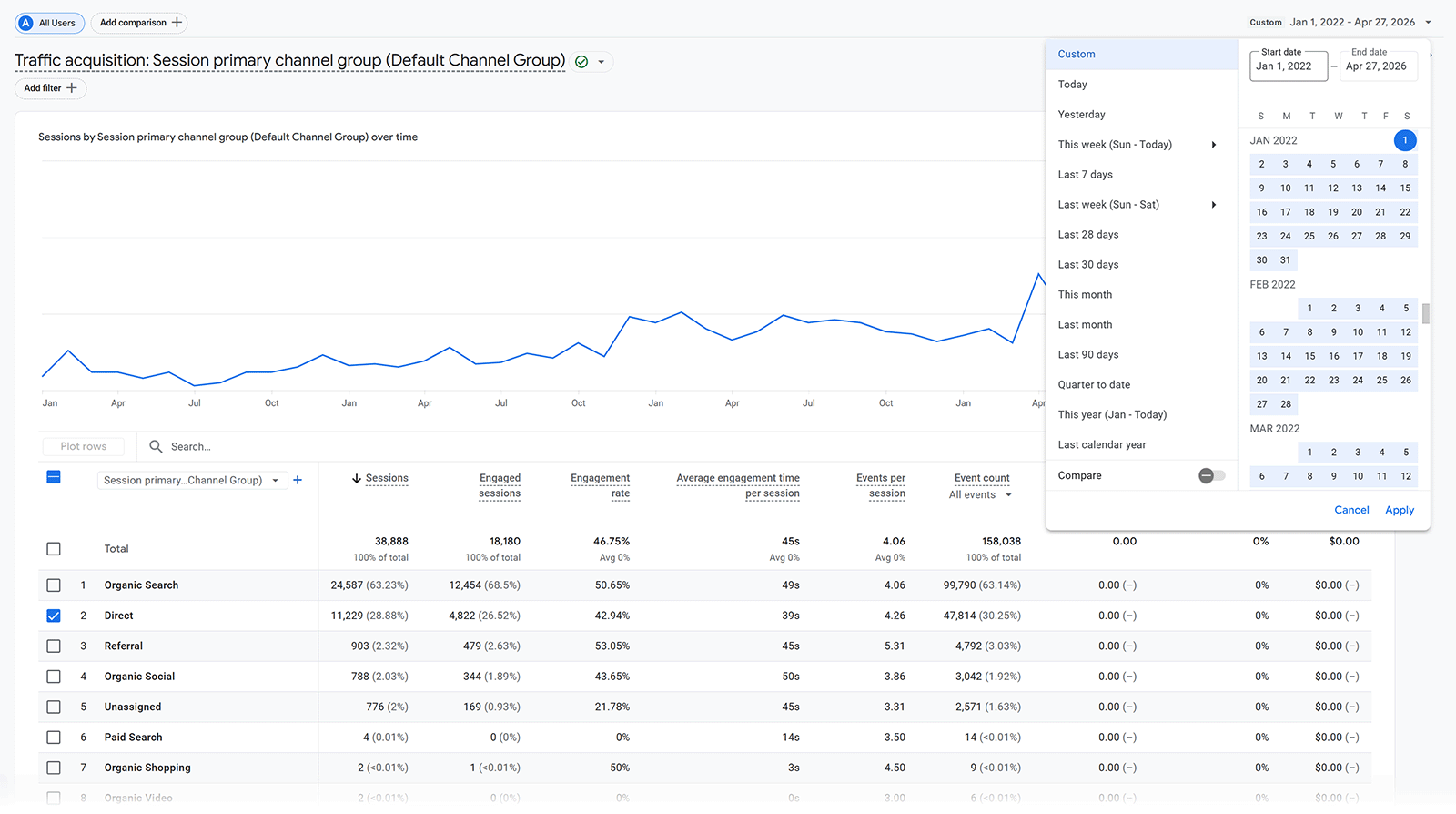

Tracking AI referral traffic in GA4

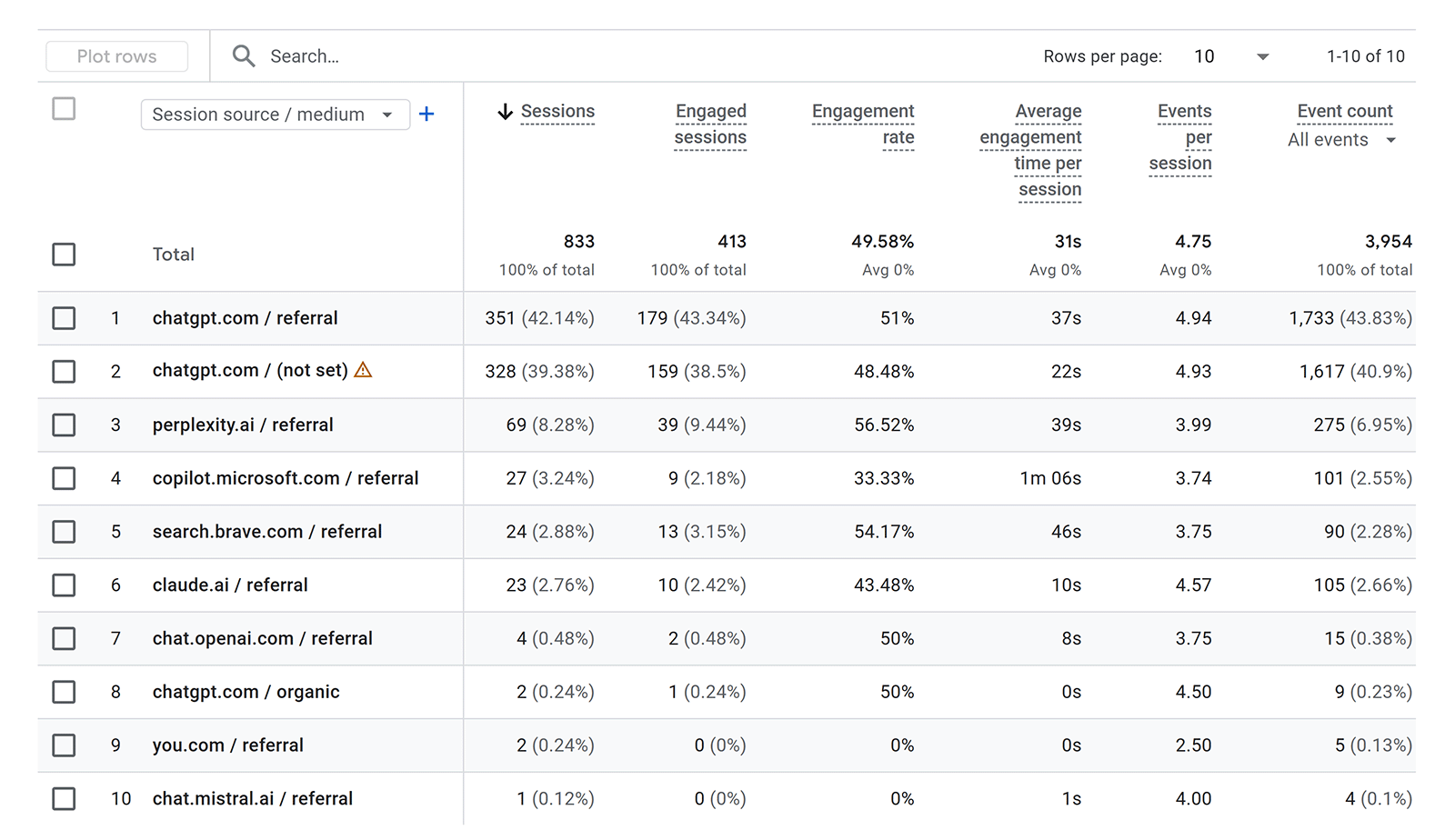

Tracking AI referral traffic in GA4 means catching the AI tool visits that do pass referral data, even if inconsistently, and isolating them from the visits that don't.

Why this matters for attribution: Tracking AI referrals is the closest thing to a direct measurement of AI traffic you currently have. It won't tell you exactly how many users are visiting your site from AI tools, but it's a powerful piece of directional data.

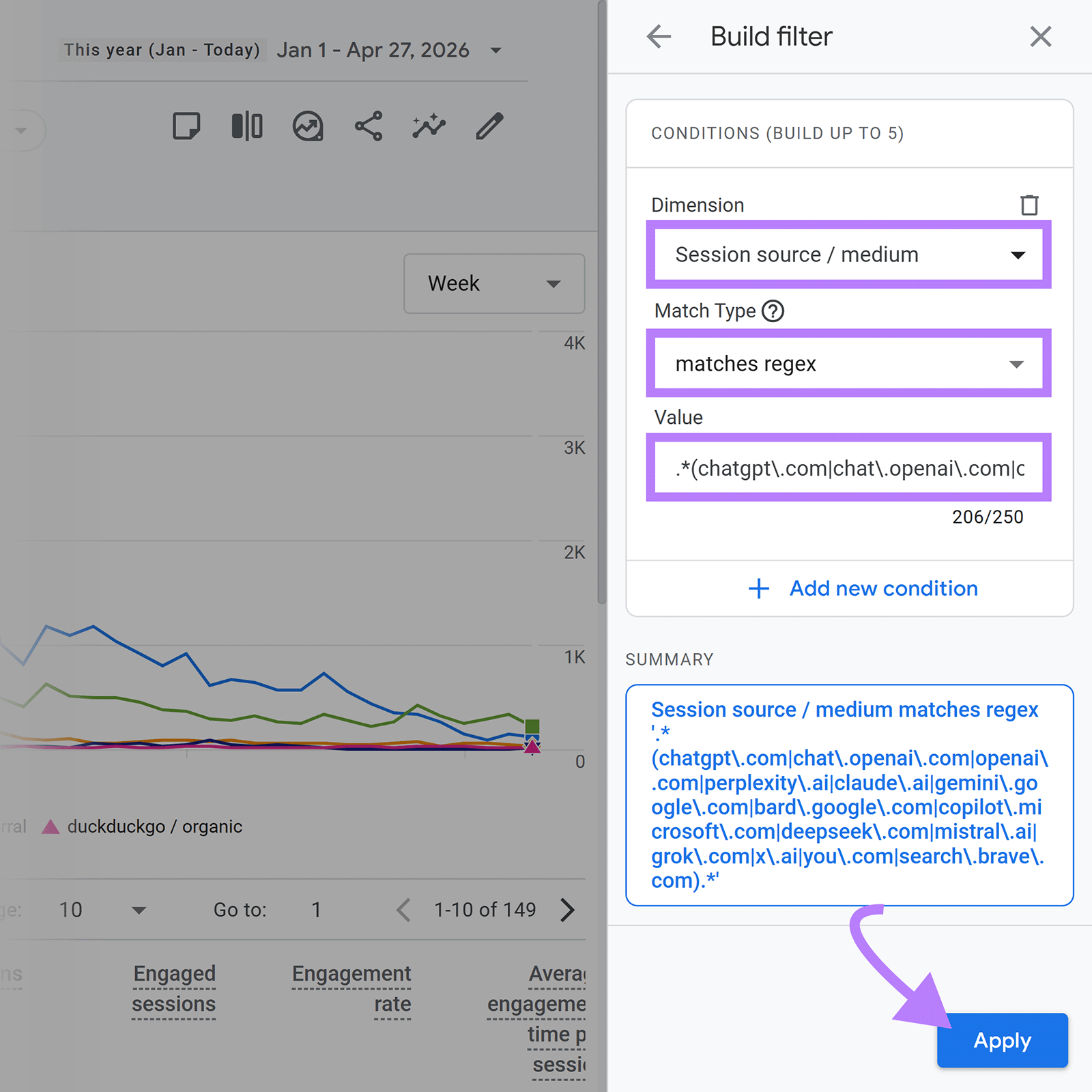

How to track it: In Google Analytics 4, go to "Reports" > "Acquisition" > "Traffic acquisition." Click "Add filter +" and set the Dimension to "Session source/medium" with the Match Type as "matches regex." Add this as the Value:

.*(chatgpt\.com|chat\.openai\.com|openai\.com|perplexity\.ai|claude\.ai|gemini\.google\.com|bard\.google\.com|copilot\.microsoft\.com|deepseek\.com|mistral\.ai|grok\.com|x\.ai|you\.com|search\.brave\.com).*

This captures referral traffic from the major AI platforms that do pass source data, although some, like ChatGPT Atlas, may mask their referrers and show up as direct traffic.
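Before relying on the filter, you can test the pattern against a few source/medium values offline. The sketch below uses Python's re module with full-match semantics, on the assumption that this mirrors how the "matches regex" match type behaves. The sample values, including "thankyou.com", are hypothetical.

```python
import re

# The same pattern as the GA4 filter above, split across lines for readability.
AI_REFERRAL_PATTERN = re.compile(
    r".*(chatgpt\.com|chat\.openai\.com|openai\.com|perplexity\.ai|claude\.ai|"
    r"gemini\.google\.com|bard\.google\.com|copilot\.microsoft\.com|deepseek\.com|"
    r"mistral\.ai|grok\.com|x\.ai|you\.com|search\.brave\.com).*"
)


def is_ai_referral(source_medium):
    """Return True if a 'source / medium' value matches the AI referral pattern."""
    return AI_REFERRAL_PATTERN.fullmatch(source_medium) is not None


# Hypothetical "Session source/medium" values.
samples = [
    "chatgpt.com / referral",
    "perplexity.ai / referral",
    "google / organic",
    "(direct) / (none)",
    "thankyou.com / referral",  # not an AI tool, but contains "you.com"
]

for value in samples:
    print(f"{value!r}: {is_ai_referral(value)}")
```

Because the pattern isn't anchored to the start of the domain, short entries like "you.com" and "x.ai" also match any source that merely ends with those strings, such as the made-up "thankyou.com". It's worth scanning the matched sources in your report for false positives like this.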

Self-reported attribution

Directly asking customers how they found you is a valuable way to gauge how AI is influencing purchase decisions.

Why this matters for attribution: This is the only metric that captures the user's own account of how they found you. It's imperfect, but it surfaces AI as a discovery channel where every other signal misses it entirely.

How to track it: Add a single optional question to your lead form, checkout flow, or post-purchase survey. Use something like "How did you first hear about us?", with options that include ChatGPT, Perplexity, Google AI, and other AI tools alongside traditional channels.

Response rates vary, people don't always remember accurately, and you need a reasonable volume of responses before patterns become meaningful. But the answers you do collect are cheap to gather and hard to get any other way.
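Once responses start coming in, a simple tally shows how often AI tools are named. This is a minimal sketch, assuming responses are exported as a list of option labels with blanks for skipped questions. The option labels and sample responses are hypothetical.

```python
from collections import Counter

# Hypothetical survey options that count as AI discovery channels.
AI_OPTIONS = {"ChatGPT", "Perplexity", "Google AI", "Other AI tool"}


def summarize_responses(responses):
    """Tally answers and return (counts, percentage of answers naming an AI tool)."""
    answered = [r for r in responses if r]  # the question is optional, so skip blanks
    counts = Counter(answered)
    ai_total = sum(n for option, n in counts.items() if option in AI_OPTIONS)
    ai_share = round(100 * ai_total / len(answered), 1) if answered else 0.0
    return counts, ai_share


# Hypothetical responses to "How did you first hear about us?"
responses = [
    "ChatGPT", "Google search", None, "Friend or colleague",
    "Perplexity", "ChatGPT", "", "Google search",
]

counts, ai_share = summarize_responses(responses)
print(counts.most_common())
print(f"AI share of answered responses: {ai_share}%")
```

Here, three of the six answered responses name an AI tool, giving a 50% share. With a sample this small the figure means little, which is why volume matters before you draw conclusions.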

A 90-day plan to close the attribution gap

You won't close the agentic attribution gap completely, but you can get a much clearer picture than most teams currently have. The framework above gives you the metrics; the sequence below gives you the order to roll them out.

Days 1-30: Establish your baseline

Before you can measure influence, you need to know where you're starting from.

- Set up the GA4 AI referral regex filter and pull a 90-day baseline for direct traffic and AI referrals

- Pull your branded search baseline in Google Search Console (or apply the new Branded queries filter if your site qualifies)

- Connect Semrush's AI Visibility Toolkit and let it run for at least two weeks to populate share of voice, mentions, and sentiment data

- Add a "How did you first hear about us?" question to one of your forms (start with the lowest-friction surface, like a post-purchase survey, rather than a checkout field)

Days 31-60: Find the patterns

With baselines in place, look for the cohorts most likely influenced by AI.

- Segment your direct and AI referral traffic by landing page, device type, and conversion rate. Pages with unexplained direct traffic spikes are your prime candidates for AI influence.

- Cross-reference the pages your AI Visibility Toolkit report flags as "cited pages" against your traffic and conversion data. If a cited page is also seeing direct traffic growth, you've found a pattern.

- Compare your AI share of voice against your sentiment scores. A high SoV with low sentiment is a different problem than a low SoV with high sentiment, and the fix is different in each case.

- Start collecting self-reported attribution responses and tag them by channel
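The cross-referencing step above can be sketched in a few lines. This example assumes you have a list of cited pages plus direct sessions per landing page for two periods. The page paths, session counts, and the 20% growth threshold are all hypothetical choices for illustration.

```python
def cited_pages_with_direct_growth(cited_pages, direct_before, direct_after,
                                   min_growth_pct=20.0):
    """Return (page, growth %) for cited pages whose direct traffic grew enough."""
    flagged = []
    for page in cited_pages:
        before = direct_before.get(page, 0)
        after = direct_after.get(page, 0)
        if before == 0:
            continue  # no baseline to compare against
        growth = 100 * (after - before) / before
        if growth >= min_growth_pct:
            flagged.append((page, round(growth, 1)))
    # Largest growth first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical inputs: cited pages and direct sessions per landing page.
cited_pages = ["/pricing", "/blog/guide", "/features"]
direct_before = {"/pricing": 500, "/blog/guide": 200, "/features": 300, "/about": 100}
direct_after = {"/pricing": 650, "/blog/guide": 210, "/features": 450, "/about": 300}

flagged = cited_pages_with_direct_growth(cited_pages, direct_before, direct_after)
print(flagged)
```

In this sample, "/features" (50% growth) and "/pricing" (30% growth) are flagged. "/about" grew the most but isn't a cited page, so it falls outside this particular pattern.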

Days 61-90: Reframe how you report

Pattern data only matters if it changes how decisions get made. This is where the reporting work happens.

If organic traffic is declining but sales are steady, and that's all you report to leadership, it'll look like there's a problem. If you also report that branded search volume is growing, direct traffic conversion rates are improving, and AI share of voice is climbing, leadership sees that your AI optimization efforts are working.

Build a simple monthly dashboard that shows the four signals together: organic traffic, branded search, direct traffic conversion rate, and AI share of voice. Frame the story explicitly: "Here's what's growing, here's why it's growing, and here's what we'd be missing if we only tracked organic." That's how you close the attribution gap inside your organization, not just in your analytics.

Build the measurement infrastructure now

The brands that figure out AI search attribution in the next 12 months will set the playbook the rest of the industry copies. The brands that wait will spend the next two years explaining to leadership why a black box is shrinking their organic numbers without a clear story for what's filling the gap.

The framework in this guide is a starting point, not a finish line. Treat it like the early days of multi-touch attribution. The models were imperfect and evolving, but the people who built measurement habits early were the ones who shaped how their orgs invested when budgets followed.

Semrush's AI Visibility Toolkit tracks your brand's presence across ChatGPT, Perplexity, Gemini, Google AI Mode, and AI Overviews. It covers share of voice, mentions, citations, cited pages, and sentiment. Start a 7-day free trial to set your baseline this week.